Why Prioritization Frameworks Are Band-Aids Covering A Problem They’ll Never Solve

The fundamental mistake behind every scoring spreadsheet

Starting now, this newsletter is about the tough questions product teams face daily, and how strategy can help you answer them.

If your team is facing these questions, read to the end.

Think about the last time you celebrated a feature launch, only to realize four months later that it moved zero business metrics. How could this “high-priority” item that scored so well result in crickets?

RICE promised objectivity. Instead, it delivered feature bloat and the death of a promising product.

All prioritization frameworks share the same fundamental flaw. They exist because there’s a strategy gap at the top.

I learned this the hard way.

Playing prioritization roulette

Our team was scrappy and laser-focused on a small set of clear, user-centered choices as we discovered, designed, and delivered a successful product launch.

But with that initial success, stakeholders from across the organization suddenly descended, each with a feature request they wanted shoehorned into our product.

To rate all these requests on some form of objective, level playing field, we turned to Intercom’s RICE framework. Reach, Impact, Confidence, Effort. Plug in the numbers, get a score, and build in order.
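For readers who haven't used it, here is a minimal sketch of the arithmetic, assuming the standard formula of Reach times Impact times Confidence, divided by Effort. The feature names, numbers, and the `FeatureRequest` type are hypothetical, made up for illustration; they are not from our actual backlog.

```python
from dataclasses import dataclass


@dataclass
class FeatureRequest:
    """A hypothetical backlog item with the four RICE inputs."""
    name: str
    reach: float       # people affected per time period (an estimate)
    impact: float      # commonly scored from 0.25 (minimal) to 3 (massive)
    confidence: float  # 0.0 to 1.0, how much you trust the other estimates
    effort: float      # person-months (also an estimate)


def rice_score(feature: FeatureRequest) -> float:
    """Reach x Impact x Confidence, divided by Effort."""
    return feature.reach * feature.impact * feature.confidence / feature.effort


# Illustrative numbers only.
backlog = [
    FeatureRequest("Bulk export", reach=500, impact=1, confidence=0.8, effort=2),
    FeatureRequest("Custom dashboards", reach=2000, impact=2, confidence=0.5, effort=4),
    FeatureRequest("SSO login", reach=300, impact=3, confidence=1.0, effort=3),
]

# "Build in order": highest score first.
ranked = sorted(backlog, key=rice_score, reverse=True)
for feature in ranked:
    print(f"{feature.name}: {rice_score(feature):.0f}")
```

Every input is an estimate, so the ranking is only as good as the guesses behind it.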

We hoped the fair, objective process behind our math would give stakeholders confidence in our roadmap choices.

The only thing we successfully prioritized was feature bloat

While we were busy scoring, triaging, and building out the flood of requests, our product began to change.

Over-engineered features our users had to be trained on never got used. Meanwhile, real customer pain points went unaddressed. And still, we kept stuffing more features in, trying in vain to satisfy every stakeholder’s insatiable demand.

Our technical, UX, and strategic debt mounted until our product collapsed under its own weight.

Leadership eventually pulled the plug.

What really happened?

We simply weren’t prepared for the flood of feature requests that came with our initial success.

We thought RICE would help us pick the right thing to build next and help us create the best collection of features.

That was the mistake.

As soon as we stopped focusing on our strategy and started playing the feature prioritization game, we were asking the wrong question.

Weaponizing priority

RICE promises to help product managers and their teams have richer backlog conversations with stakeholders. In practice, I’ve found it does the opposite.

Product teams hold up their score as “proof” they’ve done their due diligence. Yet more often than not, the Highest Paid Person’s Opinion (“HiPPO”) still decides.

The framework ultimately becomes a political weapon, as “Reach” and “Impact” scores are debated.

But no one ends up winning. Least of all, customers.

The layer problem

Prioritization frameworks fail because they operate at the wrong level.

Here’s how product teams really create value:

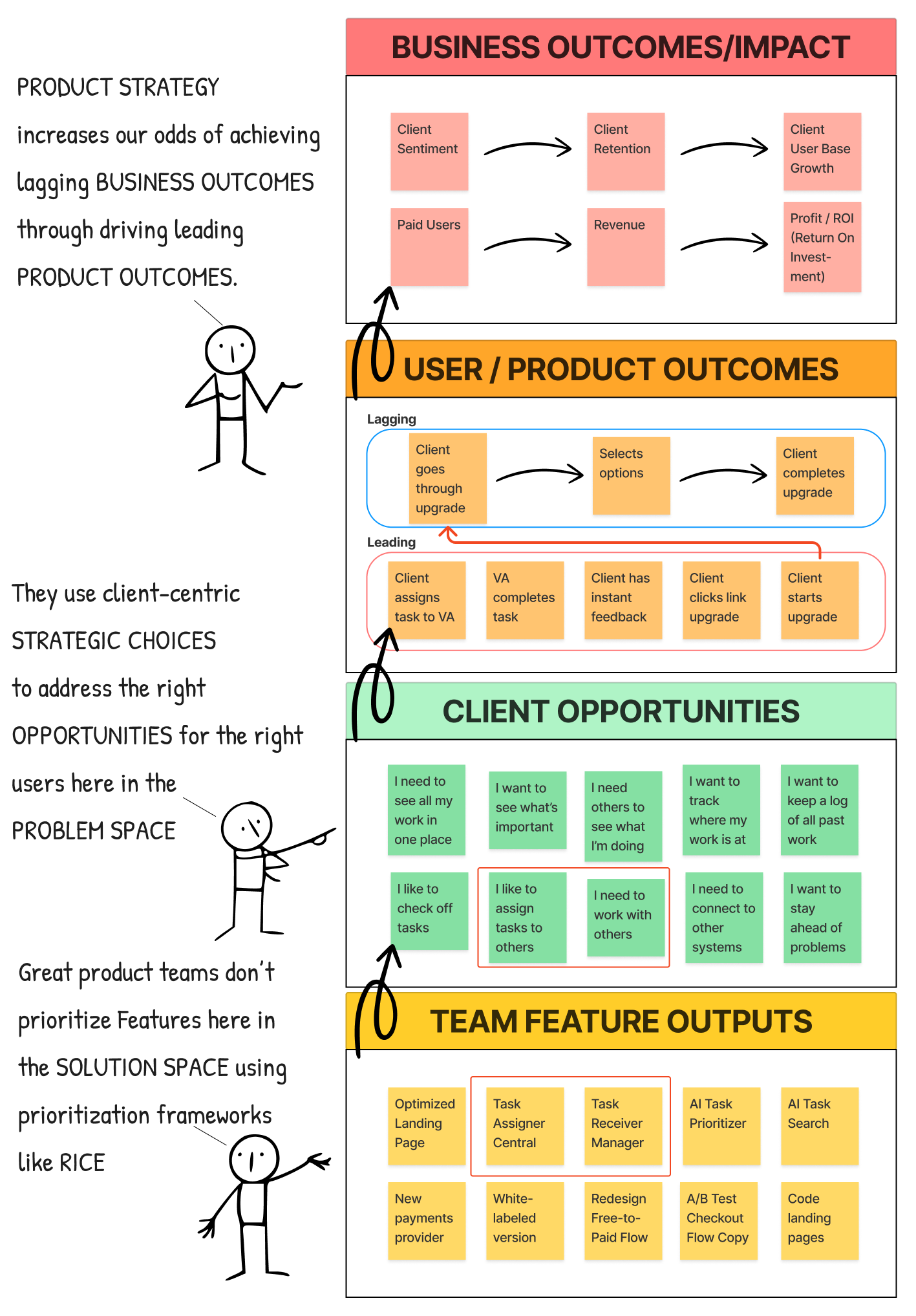

Product teams actually create value across four layers:

At the top: Business Outcomes. Revenue, retention, profit. The lagging indicators leadership cares about.

Below that: User/Product Outcomes. Changes in how users behave in your product. These are the leading indicators you can actually influence, and they ladder up to the lagging indicators above.

Below that: Customer Opportunities. Unmet needs expressed in your customers’ own words. “I need to see all my work in one place.” “I want to stay ahead of problems.”

At the bottom: Feature Outputs. The completed forms, pages, flows, and buttons you build.

RICE lives purely at the bottom layer. It can tell you which of the myriad options in your backlog and roadmap scores highest, based on the unscientific, wild guesses you make about each one. It is blind to whether any of those features address an opportunity your customers actually have.

You can score a sky-high 1,200 on a feature that solves a problem nobody cares about.
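To make that concrete, here is a small sketch, again assuming the standard Reach times Impact times Confidence over Effort formula. The two features and their inputs are hypothetical. Notice that the function has no parameter for the customer opportunity, so a feature that answers a real unmet need and one that nobody asked for can score exactly the same.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """Standard RICE arithmetic. Nothing here asks whether customers have the problem."""
    return reach * impact * confidence / effort


# Hypothetical feature A: addresses a need customers voiced in their own words.
solves_real_need = rice_score(reach=800, impact=3, confidence=1.0, effort=2)

# Hypothetical feature B: nobody asked for it, but it was given the same guesses.
nobody_asked = rice_score(reach=800, impact=3, confidence=1.0, effort=2)

print(solves_real_need, nobody_asked)  # 1200.0 1200.0
```

The score cannot tell these two apart, because the difference between them lives in a layer the formula never sees.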

What great teams do instead

Great product teams start by making clear strategic choices about which opportunities to pursue for their chosen customers, and how they will solve for them.

This means asking different questions:

For which customers are we building this, so they can finally do what?

Which unmet need does this address?

How does this connect to our higher-level choices about where we play and how we win?

And, most importantly:

Which customers and opportunities do we choose not to address at this time?

When you’re working against a clear set of choices, what to build next becomes obvious.

You’re not bickering between competing features based on some very subjective and suspect math.

You’re focusing your team’s limited capacity on solving a specific problem for a specific customer in a way that advances your company’s overarching strategy.

Not What Is True. What Would Have to Be True?

Roger Martin calls this shift “What Would Have To Be True?”

Instead of debating which feature is right, you ask:

What would have to be true about our customers for this opportunity to matter?

Under what conditions would our capabilities be able to deliver against it?

Only by making this shift can you stop having opinion battles and start surfacing and testing logic and hidden assumptions.

Solving the real problem

81% of product managers believe strategy is core to their role. But only 25% work at companies that provide the clear strategic direction they need to lead their product teams.

That gap is where the pain lives. And no scoring formula will ever close it.

But you don’t have to wait for leadership to fix this. You can reverse-engineer your current strategy, surface the choices already being made, and design better ones at your level.

My Offer to You

I’m looking for a few Product Trios (PM, UX, and Tech leads) who are drowning in feature requests without strategic clarity.

You’ll walk away from our free 30-minute session with a clear picture of your team’s actual strategy and the first question you need to answer to fix it.

Reply CLARITY if that’s you.

I’ve seen this play out too, so yes, the framework is rarely the issue. It’s the absence of a shared strategic lens above it.

There’s solid evidence behind this. A 2023 McKinsey study found that 70 percent of product features built never materially impact customer or financial outcomes, despite passing prioritisation processes.

Scoring systems give comfort, not direction, and they often turn disagreement into arithmetic rather than resolving it.

When strategy is fuzzy, maths becomes a proxy for leadership decisions nobody wants to own.

The uncomfortable bit is that no framework can compensate for a lack of clarity on who the product is really for and what must not be built.

So the real question is, how do teams surface and resolve that strategic gap before they reach for another scoring model?

“If this is true, what else is true?” is also a good war game.